12.30.2011

Vitamin B-12 Deficiency May Lead to Cognitive Problems in Aging

Older people with low blood levels of vitamin B12 markers may be prone to brain shrinkage and cognitive problems, according to a recent study.

"These findings, while inconclusive, should be of interest to seniors and their families," said Alexis Barry, an R.N. and director of Services at HomeCare Options, a not-for-profit home care agency which for more than 57 years has provided services for northern New Jersey's frail and elderly. "They lend support to the contention that low levels of vitamin B12 may be a risk factor for brain atrophy and, as a result, cognitive impairment among the elderly."

The study, conducted by researchers at Rush University Medical Center as part of the Chicago Health and Aging Project, involved 121 participants who had blood drawn to measure levels of B12 and B12-related markers that can indicate a B12 deficiency. Their memory and other cognitive skills were also measured. Four and a half years later, MRIs were taken of the participants' brains to measure brain volume and look for other signs of damage.

Researchers found that cognitive scores decreased for each increase of one micromole per liter of homocysteine, a marker of B12 deficiency. An earlier study conducted in the U.K. supported this outcome.

"It's probably too early to say that older people need to consider increasing their consumption of B12 levels through changes in their diet or take supplements in order to prevent cognitive problems," said Barry. "But, these findings are certainly of interest."

Rich sources of vitamin B12 are foods that come from animals, including fish, meat (especially liver), eggs, milk and poultry. Fortified breakfast cereals are also considered a good source.

Labels: B-12, Ruth-University, vitamins

12.29.2011

Scientists Assert That Some Diets Protect Aging Brains, Others Cause Harm

Human brains tend to shrink and become less nimble in old age, but healthier eating may slow the process.

A study of older adults in Oregon identified mixtures of nutrients that seem to protect the brain, and other food ingredients that may worsen brain shrinkage and cognitive decline.

Diets high in trans fats -- long known to harm the heart and blood vessels -- stood out as posing the most significant risk for brain shrinkage and loss of mental agility. People whose diets supplied them with an abundance of vitamins B, C, D, and E consistently scored better on tests of mental performance and showed less brain shrinkage than peers with lesser intake of those nutrients.

"Trans fats appeared the most detrimental to cognitive function and brain volume in our study," said lead author Gene Bowman, a naturopathic doctor and assistant professor of neurology at Oregon Health & Science University. "Levels of trans fat weren't that high in the blood, so it doesn't take that much."

Unlike previous studies, which have relied on questionnaires to estimate nutrient intake, the Oregon researchers directly measured levels in the blood. That makes the evidence stronger, although not as definitive as a controlled clinical trial. "We wanted to take recall ability out of the equation," Bowman said.

Researchers at OHSU and Oregon State University enlisted 104 of the women and men who have volunteered for the Oregon Brain Aging Study that began in 1989. Their average age was 87. All of them completed a battery of tests of memory and thinking skills, and 42 volunteers also had MRI scans to measure their brain volume.

Rather than focus on single nutrients, the researchers analyzed combinations of nutrients and how they related to brain health.

"We used statistical models that help us appreciate the interaction of nutrients," Bowman said. "There is never just vitamin E or vitamin B-12 circulating in the blood, there's 1,000s of molecules circulating there."

Measuring blood levels allowed researchers to account for important variables all at once, including differences in the way people metabolize food.

"Thus, nutrient biomarker patterns may more closely reflect what is available to brain tissues," said Christy Tangney of Rush University Medical Center and Nikolaos Scarmeas of Columbia University in a commentary on the study.

The Oregon researchers found two nutrient patterns that appeared to promote brain health: The BCDE pattern high in vitamins and antioxidants found in fruits and vegetables, and an omega-3 pattern high in the fatty acids found in fish. But the effect of omega-3 was only significant on one of the six tests of brain function after researchers took into account differences in blood pressure and depression, big risk factors for cognitive decline. The lack of a strong effect fits with a 2010 clinical trial in which fish oil supplements failed to slow the advance of Alzheimer's disease.

Labels: oregon-live, oregon-state, vitamins-b-c-d-e

12.24.2011

New Method of Diagnosing MCI and Alzheimer's

Hope for early detection of Mild Cognitive Impairment may be around the corner with this new study.

Finnish scientists have identified metabolomic signatures for diagnosing patients with Alzheimer’s disease, as well as predicting which sufferers of mild cognitive impairment, or MCI, will go on to develop the disease.

Undertaken as part of the EU’s Predict AD research initiative, the project, which investigated markers in prospectively collected serum samples from more than 200 subjects, is one of the first large-scale efforts to discover metabolomic biomarkers for Alzheimer's, said Matej Oresic, a researcher at VTT Technical Research Centre of Finland and leader of the study.

Alzheimer’s disease is a significant area within proteomics research, with protein biomarkers seen as key to drug development, particularly with regard to selecting patient cohorts and monitoring therapy response during clinical trials (PM 12/2/2011). Indeed, in June a report commissioned by proteomics firm Proteome Sciences predicted that protein biomarkers for Alzheimer's disease will represent a cumulative $9 billion market over the next ten years (PM 6/3/2011).

Metabolomic biomarkers for Alzheimer’s have received significantly less attention, Oresic told ProteoMonitor, noting that “there are ongoing efforts, but not nearly as many as [there have been] in proteomics.”

While “there have been for some years [Alzheimer’s] studies looking at lipids — lipidomics, basically” — this work has “not been on a large scale, and developing a truly diagnostic model [using metabolomic markers] had not been done,” he said.

“Metabolomics is a relatively new field,” Oresic added. “I think people have been focused so much on proteomics for Alzheimer’s biomarkers because of the specific proteins [such as A-beta and tau] that have been so well studied” in models of the disease.

In the study, which was published in the current edition of Translational Psychiatry, the researchers used two-dimensional gas chromatography with time-of-flight mass spec on a Leco Pegasus 4D GC×GC-TOFMS instrument, as well as LC-MS on a Waters Acquity UPLC/Q-TOF Premier system to measure levels of 683 metabolites in 226 serum samples.

With these data, they performed model selection via logistic regression analysis in multiple cross-validation runs to develop metabolite panels both for diagnosing Alzheimer’s and for predicting patients’ progression from MCI to Alzheimer’s. The best diagnostic panel was selected in 248 of 1,000 cross-validation runs and consisted of three phosphatidylcholines along with ketovaline. The best panel for predicting progression was selected in 195 of 1,000 cross-validation runs and consisted of one phosphatidylcholine, an unidentified carboxylic acid, and 2,4-dihydroxybutanoic acid, which, Oresic noted, is responsible for the majority of this panel’s predictive power.

When combined with age, the diagnostic signature identified patients with Alzheimer’s with AUC of 0.81, with 67 percent sensitivity and 76 percent specificity. The predictive signature identified patients progressing from MCI to Alzheimer’s with AUC of 0.77, with 77 percent sensitivity and 70 percent specificity.
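For readers unfamiliar with these measures, here is a minimal Python sketch of how sensitivity, specificity and AUC are computed from a set of known diagnoses and model scores. The data, the 0.5 cutoff and the function names are made up for illustration; none of them come from the VTT study, and this is not the researchers' code.

```python
def sensitivity_specificity(labels, predictions):
    """Return (sensitivity, specificity); 1 = has the disease, 0 = does not."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)  # true positives
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)  # missed cases
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)  # true negatives
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)  # false alarms
    return tp / (tp + fn), tn / (tn + fp)


def auc(labels, scores):
    """AUC: chance that a random positive scores above a random negative.

    Ties count as half a win (the rank-based definition).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 3 patients with the disease, 4 without.
labels = [1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.6, 0.4, 0.7, 0.3, 0.2, 0.1]

# Assumed cutoff of 0.5 turns each score into a yes/no prediction.
predictions = [1 if s >= 0.5 else 0 for s in scores]

sens, spec = sensitivity_specificity(labels, predictions)
area = auc(labels, scores)
print(f"sensitivity={sens:.2f} specificity={spec:.2f} AUC={area:.2f}")
```

Sensitivity and specificity depend on the cutoff chosen, while AUC summarizes performance across all possible cutoffs, which is why studies like this one usually report all three.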

Protein biomarker studies led by University of Pennsylvania researchers Les Shaw and John Trojanowski have shown that measurements of A-beta and total tau protein levels in cerebrospinal fluid can predict progression from MCI to Alzheimer’s roughly 90 percent of the time (PM 6/11/2010), better than the results obtained by the VTT team’s metabolomic panel. CSF is generally more difficult to obtain than serum, however, which makes serum-based tests like the one developed by Oresic’s team desirable.

Adoption of CSF A-beta and tau as Alzheimer’s biomarkers has also been slowed by the difficulty of achieving good reproducibility across runs and across labs. Metabolomics approaches don’t eliminate this issue, Oresic said, but they do present a somewhat different set of challenges.

Mass spec-based proteomics has presented reproducibility issues in part due to the CVs associated with trypsin digestion and measurements of low-abundance analytes. Oresic said he was confident that standardizing mass spec-based quantitation of metabolomic biomarkers would not be a problem. Instead, he said, the challenge would come primarily from sample collection.

“In terms of the quantitation, there shouldn’t be an issue [with reproducibility], but I think sampling is an issue,” he said. “Some metabolites are very sensitive to the treatment of samples: do they stay at room temperature or are they frozen immediately? Things like that.”

“That is something that would need to be investigated for each specific metabolite,” he added. “Some, like steroids, are very stable, but some are very sensitive. So the sampling and extraction part [of the workflow] really need to be carefully standardized and investigated to see how much variability we can expect.”

Metabolomic markers could prove useful in concert with proteomic markers, Oresic noted, a notion borne out, he suggested, by another Alzheimer’s study led by University of Gothenburg researcher Kaj Blennow and published contemporaneously in PLoS One.

That study, which Blennow undertook with researchers from biomarker detection firm Quanterix, used Quanterix’s Single Molecule Array technology to measure A-beta-42 levels in the serum of heart attack patients, finding that levels of that Alzheimer’s biomarker rose in subjects with severe hypoxia due to cardiac arrest.

The main biomarker in the VTT researchers’ predictive metabolomic profile — 2,4-dihydroxybutanoic acid — is also linked to hypoxia, Oresic noted. “They showed there is a link between hypoxia in the brain and what is going on with [A-beta-42] in the blood, and I think that is what we are picking up in our biomarkers, as well,” he said. “So it could be that in this early stage of Alzheimer’s lack of oxygen to the brain could be one of the initiating factors.”

“I think it’s still too early to tell, but there is potential for combining” metabolomic and proteomic markers, Oresic said. “Once we really know better what they are reflecting in terms of pathophysiology, it will be easier to think about the most optimal ways of combining them.”

He added that, “technologically, it shouldn’t be an issue,” noting that while in the Translational Psychiatry study his team used a GC×GC-TOFMS instrument for measuring some small polar metabolites, this could also be done on an LC-MS system like those used for proteomics work.

The researchers now plan to run a validation study of their markers in a larger set of patients, as well as investigate them in CSF and biopsy samples from the original cohort, Oresic said. He added that he is involved in conversations with several large pharma firms regarding validation of the markers, but he declined to name any specific companies.

Labels: alzheimers, MCI, metabolomic, oresic

12.23.2011

Scientists Measure Dream Content

Researchers at the Max Planck Institute for Psychiatry measured dream content with the help of lucid dreamers, people who become aware of their dreaming state and are able to alter the content of their dreams. The lucid dreamers were asked to become aware of their dream while sleeping in an MRI scanner and to report this “lucid” state to the researchers by means of eye movements. They were then asked to voluntarily “dream” that they were repeatedly clenching first their right fist and then their left one for ten seconds.

This enabled the scientists to measure the entry into REM sleep — a phase in which dreams are perceived particularly intensively — with the help of the subject’s electroencephalogram (EEG) and to detect the beginning of a lucid phase. The brain activity measured from this time onwards corresponded with the arranged “dream” involving the fist clenching.

From Kurzweil AI

New Research on Dreams and the Brain from the Max Planck Institute

A region in the sensorimotor cortex of the brain, which is responsible for the execution of movements, was actually activated during the dream. The coincidence of the brain activity measured during dreaming and the conscious action shows that dream content can be measured. “With this combination of sleep EEGs, imaging methods and lucid dreamers, we can measure not only simple movements during sleep but also the activity patterns in the brain during visual dream perceptions,” says Martin Dresler, a researcher at the Max Planck Institute for Psychiatry.

The researchers were able to confirm the data obtained using MR imaging in another subject using a different technology. With the help of near-infrared spectroscopy, they also observed increased activity in a region of the brain that plays an important role in the planning of movements. “Our dreams are therefore not a ‘sleep cinema’ in which we merely observe an event passively, but involve activity in the regions of the brain that are relevant to the dream content,” explains Michael Czisch, research group leader at the Max Planck Institute for Psychiatry.

Ref.: Martin Dresler, et al., Dreamed Movement Elicits Activation in the Sensorimotor Cortex, Current Biology, 2011.

Labels: kurzweil, max-planck, sensorimotor

12.21.2011

Cognitive Multi-Tasking an Inborn Skill?

Imagine you're a hockey goalie, and two opposing players are breaking in alone on you, passing the puck back and forth. You're aware of the linesman skating in on your left, but pay him no mind. Your focus is on the puck and the two approaching players. As the action unfolds, how is your brain processing this intense moment of "multi-tasking"? Are you splitting your focus of attention into multiple "spotlights?" Are you using one "spotlight" and switching between objects very quickly? Or are you "zooming out" the spotlight and taking it all in at once?

These are the questions Julio Martinez-Trujillo, a cognitive neurophysiology specialist from McGill University, and his team set out to answer in a new study on multifocal attention. They found the first evidence that we can pay attention to more than one thing at a time.

"When we multi-task and attend to multiple objects, our visual attention has been classically described as a 'zoom lens' that extends over a region of space or as a spotlight that switches from one object to the other," Martinez-Trujillo, the lead author of the study, explained. "These modes of action of attention are problematic because when zooming out attention over an entire region we include objects of interest but also distracters in between. Thus, we waste processing resources on irrelevant distracting information. And when a single spotlight jumps from one object to another, there is a limit to how fast that could go and how the brain can accommodate such a rapid switch. Importantly, if we accept that attention works as a single spotlight we may also accept that the brain has evolved to pay attention to one thing at a time and therefore multi-tasking is not an ability that naturally fits our brain architecture."

Martinez-Trujillo's approach in getting to the bottom of this long-standing controversy was novel. The team recorded the activity of single neurons in the brains of two monkeys while the animals concentrated on two objects that circumvented a third 'distracter' object. The neural recordings showed that attention can, in fact, be split into two "spotlights" corresponding to the relevant objects and excluding the in-between distracter.

"One implication of these findings is that our brain has evolved to attend to more than one object in parallel, and therefore to multi-task," said Martinez-Trujillo. "Though there are limits, our brains have this ability."

The researchers also found that the split of the "spotlight" is much more efficient when the distracters are very different from the objects being attended. Going back to the hockey analogy, Martinez-Trujillo explained that if a Montreal Canadiens forward is paying attention to two Boston Bruins in yellow and black, he'll have a more difficult time ignoring the linesmen, also wearing black, than if he were in a similar situation but facing two Vancouver Canucks with blue and green uniforms, easily distinguishable from the linesmen in black.

In the next generation of experiments, the researchers will explore the limits of our ability to split attention and multi-task – looking more closely at how the similarity between objects affects multi-tasking limits and how those variables can be integrated into a quantitative model.

Labels: feng-gui, heat-map, martinez-trujillo, multi-tasking

12.20.2011

Low-Calorie Diet Influences Brain Aging

What is CREB1?

CREB1 is a protein molecule known to regulate a wide range of brain functions, including learning, memory and anxiety control. As people age, this protein's efficacy is reduced, leading to a decrease in cognitive function. Compromised CREB1 function can also lead to degenerative illnesses such as Alzheimer's disease or Parkinson's.

What are the specifics of the study?

The study was conducted by researchers working under the auspices of the Catholic University of the Sacred Heart in Rome, in collaboration with scientists at the Institute of Human Physiology, according to Medical Xpress. The study involved the observation and testing of mice.

Mice were fed a calorie-restricted diet, which involved consuming only 70 percent of what they would normally eat. This was done to try to isolate the mechanisms by which a low-calorie diet affects brain function, an effect that various studies and models had already demonstrated.

Scientists already knew CREB1 was essential to cognitive function and the brain's aging process, so they decided to focus their efforts on the effects of lacking or reducing that protein. In addition to the typical mice fed the calorie-restricted diet, other mice were engineered to be completely devoid of CREB1.

What were the results?

The mice that had been genetically altered to lack CREB1 experienced the loss of cognitive function and onset of degenerative illnesses in the same manner obese mice have been shown to suffer. The calorie-restricted mice showed significant retention of their cognitive abilities, including memory. Those on the calorie-restricted diet that eventually developed degenerative illnesses generally did so at a much slower and less devastating rate of decline than their CREB1-deficient or obese peers, according to UKPA.

In addition, the calorie-restricted mice lived 30 percent to 40 percent longer than their counterparts. There were behavioral differences as well, with the calorie-restricted mice showing less aggressive behavioral tendencies. They also were less likely to develop physical ailments such as diabetes.

Labels: creb1, low-cal, low-calorie

12.17.2011

Antioxidants and the Brain - New Hope

When you cut an apple and leave it out, it turns brown. Squeeze lemon juice, an antioxidant, over the apple and the process slows down.

Simply put, that same "browning" process, known as oxidative stress, happens in the brain as Alzheimer's disease sets in. The underlying cause is believed to be improper processing of a protein associated with the creation of free radicals that cause oxidative stress.

Now, a study by researchers in the University of Georgia College of Pharmacy has shown that an antioxidant can delay the onset of all the indicators of Alzheimer's disease, including cognitive decline. The researchers administered an antioxidant compound called MitoQ to mice genetically engineered to develop Alzheimer's. The results of their study were published in the Nov. 2 issue of the Journal of Neuroscience.

According to the Alzheimer's Society, more than 5 million Americans currently suffer from the neurodegenerative disease. Without successful prevention, almost 14 million Americans will have Alzheimer's by 2050, accounting for healthcare costs of more than $1 trillion a year.

Oxidative stress is believed to cause neurons in the brain to die, resulting in Alzheimer's. Study author James Franklin, an associate professor of pharmaceutical and biomedical sciences, has studied neuronal cell death and oxidative stress at UGA since 2004.

"The brain consumes 20 percent of the oxygen in the body even though it only makes up 5 percent of the volume, so it's particularly susceptible to oxidative stress," said Franklin, coauthor of the study along with Meagan McManus, who received her Ph.D. in neuroscience from UGA in 2010.

The UGA researchers hypothesized that antioxidants administered unsuccessfully by other researchers to treat Alzheimer's were not concentrated enough in the mitochondria of cells. Mitochondria are structures within cells that have many functions, including producing oxidative molecules that damage the brain and cause cell death.

"MitoQ selectively accumulates in the mitochondria," said McManus, who is now studying mitochondrial genetics and dysfunction as a postdoctoral researcher at Children's Hospital of Philadelphia.

"It is more effective for the treatment to go straight to the mitochondria, rather than being present in the cell in general," she said.

Although he had not previously conducted research on Alzheimer's disease, Franklin was moved to approve McManus' research proposal to take his laboratory research in a more clinical direction in part because of her family's history with the disease. "Two of my grandparents had Alzheimer's disease, but they presented with it very differently. While my granddad often couldn't remember who we were, he was still the same soulful funnyman I'd always loved. But the disease changed my grandmother's mind in a different way, and turned her into someone we'd never known," said McManus.

"So the complexity of the disease was most intriguing to me. I wanted to know how and why it was happening, and more importantly, how to stop it from happening to other people," she said.

In their study, mice engineered to carry three genes associated with familial Alzheimer's were tested for cognitive impairment using the Morris Water Maze, a common test for memory retention. The mice that had received MitoQ in their drinking water performed significantly better than those that didn't. Additionally, the treated mice tested negative for the oxidative stress, amyloid burden, neural death and synaptic loss associated with Alzheimer's.

Labels: alzheimers, anti-oxidants, antioxidant

12.15.2011

CogniFit Shows Off Validated Instrument

A study recently published in the field of neuroscience and neuromatics presents evidence for the efficacy of brain training in groups of senior-dwelling older adults.

This is good news for all in the nascent brain-training movement.

Labels: CogniFit

12.13.2011

Games Including Bingo Subject of New Research on the Brain

Games including Bingo used in new Cognitive Research:

In a paper published online in the journal Aging, Neuropsychology, and Cognition, a team of researchers from Boston University and Case Western Reserve University (Cleveland, Ohio) demonstrated how changing the way something looks – in this case, the cards in the game Bingo – can enhance performance in groups with subtle problems in vision. The paper, entitled "Bingo! Externally supported performance intervention for deficient visual search in normal aging, Parkinson's disease, and Alzheimer's disease," was published by the journal in a special issue on cognitive and motivational mechanisms compensating for the limitations in performance on complex cognitive tasks across the adult lifespan.

The researchers chose to investigate the game of Bingo because it is a popular and familiar leisure activity. Coincidentally, it requires using aspects of vision that have been shown to be impaired to varying degrees in normal aging and in the common neurodegenerative disorders of Parkinson's disease and Alzheimer's disease. Players generally try to keep track of multiple cards at once to increase their odds of winning, but this is made difficult by the fact that Bingo cards used in community games are rather small and faint in print. The researchers investigated whether players' Bingo performance could be improved by making the cards larger and the numbers on them bolder, and by decreasing the number of cards played at one time.

Participants in the study were 19 healthy younger adults, 33 healthy older adults, 14 individuals with probable Alzheimer's disease, and 17 non-demented individuals with Parkinson's disease. The researchers found that increasing card size and decreasing visual complexity through reducing the number of cards to search resulted in improvements in performance by all groups. "It is basic and simple to increase the size and decrease the complexity of the visual aspects of an everyday task, yet it helped each of the groups we studied," said researcher Thomas Laudate, Ph.D., lead author of the study.

Participants with Alzheimer’s disease received additional benefit from increasing the visual boldness of the numbers on the cards, which presumably compensated for the patients’ reduced contrast sensitivity. "This research helps show that those with Alzheimer’s disease have visual deficits that interfere with functioning but they can be helped by increasing the contrast, or boldness, of the things they see," said Dr. Laudate. The director of the research team, Dr. Alice Cronin-Golomb, concurred. "We focus so much on memory impairments that we sometimes forget that older adults can have impairments in other domains, too, such as in vision. We can’t fix memory very well but we have a whole arsenal of techniques to improve vision, and with that comes an improved quality of life."

This study supports previous research from the authors that has shown that enhancing visual aspects of the environment improves the ability to engage in a wide range of vital everyday activities including reading, eating, taking medication and recognizing faces and objects, even in those with cognitive difficulties. The improved functioning observed in healthy elders and in those with Parkinson’s or Alzheimer’s suggests the value of external visual support as an effective, easy-to-apply intervention to compensate for visual impairments. It emphasizes the importance of educating caregivers and health care professionals regarding interventions to counteract these visual impairments and their effect on everyday activities, in order to promote social interaction and independence in the older members of our communities.

The authors are Thomas Laudate, Sandy Neargarder, Tracy Dunne, Karen Sullivan, Pallavi Joshi, and Alice Cronin-Golomb, all of Boston University Department of Psychology, and Grover C. Gilmore and Tatiana Riedel of the Mandel School of Applied Social Sciences at Case Western Reserve University, Cleveland, Ohio.

Labels: alzheimers, bingo, case-western-reserve, parkinsons

12.12.2011

Positive Effects of Video Games Featured in New Research

For the first time, the positive effects of computer games on thoughts, emotions and behaviour will be the subject of closer scrutiny by social psychologists. A total of three studies will explore how, to what extent and for how long cooperative gaming behaviour positively influences the personality of gamers. The project, funded by the Austrian Science Fund (FWF), will complement the current state of research on personality effects from computer games, which has previously been dominated by studies of negative consequences. The studies have the potential to offer significant ideas for analysing and reinforcing social skills in all age groups.

Scientists currently agree that violent video games increase aggressive tendencies. When it comes to cooperative games, i.e. games played in a team with other (human) players pursuing the same goal, however, the effects are less well known. In such games, the success of one player depends on the success of another player, and vice versa. Can these games influence the thoughts and emotions of players, as well as their cooperative behavior? A team of social psychologists will be looking for answers in a project funded by the Austrian Science Fund.

From first-person shooter to team player

Prof. Tobias Greitemeyer from the Institute of Psychology at the University of Innsbruck, who is in charge of the project, explains the background: "In two earlier pilot studies, we had already noted the positive effects of collective gaming. However, to validate these findings, we must conduct more extensive, longer studies. That's exactly what we'll be able to do now." The project, which is about to be launched, is divided into three distinct studies seeking to answer different questions.

The first correlational study is set to investigate the magnitude and type of impact that cooperative video games have on prosocial cognition. Participants will be interviewed about their preferences and gaming habits. Subsequent word completion tasks will highlight the prevalence of cooperative thought patterns; these are considered to be an indicator of the tendency towards community-oriented behaviour. Surveys and so-called "dilemma tasks", in which participants have to make decisions in situations of social conflict, provide clues about their social value orientation. For example, prosocial gamers can be distinguished from competitive and individualistic ones. It is this distinction that points to important correlations between gaming behaviour and emotional attitudes.

Labels: greitemeyer, innsbruck, physorg, video-games

12.09.2011

Emotional Learning in the Brain

Scientists have found that the cortex plays an essential part in emotional learning.

The study, initiated by the Swiss researchers and published in Nature, constitutes ground-breaking work in exploring emotions in the brain.

Anxiety disorders constitute a complex family of pathologies affecting about 10% of adults. Patients suffering from such disorders fear certain situations or objects to an exaggerated extent, out of all proportion to the real danger they present. The amygdala, a deep-brain structure, plays a key part in processing fear and anxiety. Its functioning can be disrupted by anxiety disorders.

Although researchers are well acquainted with the neurons of the amygdala and with the part those neurons play in expressing fear, their knowledge of the involvement of other regions of the brain remains limited. And yet, there can be no fear without sensory stimulation: before we become afraid, we hear, we see, we smell, we taste, or we feel something that triggers the fear. This sensory signal is, in particular, processed in the cortex, the largest region of the brain.

For the first time, these French and Swiss scientists have succeeded in visualising the path of a sensory stimulus in the brain during fear learning, and in identifying the underlying neuronal circuits.

What happens in the brain?

During the experiments conducted by the researchers, mice learnt to associate a sound with an unpleasant stimulus so that the sound itself became unpleasant for the animal.

The researchers used two-photon calcium imaging to visualise the activity of the neurons in the brain during this learning process. This imaging technique involves injecting a chemical indicator that is then absorbed by the neurons. When the neurons are stimulated, the calcium ions penetrate into the cells, where they increase the brightness of the indicator, which can then be detected under a scanning microscope.

Under normal conditions, the neurons of the auditory cortex are highly inhibited. During fear learning, a "disinhibitory" microcircuit in the cortex is activated: for a short time window during the learning process, the release of acetylcholine in the cortex activates this microcircuit and disinhibits the excitatory projection cells of the cortex. As a result, when the animal perceives a sound during fear learning, that sound is processed much more intensely than under normal conditions, which facilitates the formation of memory. All of these stages have been visualised by means of the techniques developed by the researchers.

To confirm their discoveries, the researchers used another highly innovative recent technique (optogenetics) to disrupt the disinhibition selectively during the learning process. When they tested the memories of their mice (i.e. the association between the sound and the unpleasant stimulus) the next day, they observed a severe deterioration in memory, directly showing that cortical disinhibition is essential to the process of learning fear.

The discovery of this cortical disinhibitory microcircuit opens up interesting clinical prospects, and researchers can now imagine, in very specific situations, how to prevent a trauma from taking hold and becoming pathological.

Labels: amygdala, cortex, disinhibit, futurity, learning-process, microcircuit

12.08.2011

Researchers Examine Benefits of Virtual Worlds

Photo Credit: Daily Galaxy

Virtual worlds can provide young people with exclusive environments that help them learn and practise skills used in real-world settings, such as organisational and cognitive skills, a new study has revealed.

Academics on the Inter-Life project developed 3D 'Virtual Worlds' (private islands) to act as informal communities that allow young people to interact in shared activities using avatars. The avatars are three-dimensional characters controlled by the participants.

Virtual Worlds offer the possibility of realistic, interactive environments that can go beyond the formal curriculum.

The project involved young people undertaking creative activities such as film making and photography, and encouraged them to carry out project activities within the virtual environments.

The students had to learn to cope with many scenarios on their island, as well as participate in the online communities over several months.

Throughout the project, the researchers encouraged new forms of communication, including those used in online gaming.

"We demonstrated that you can plan activities with kids and get them working in 3D worlds with commitment, energy and emotional involvement, over a significant period of time," said lead researcher, Professor Victor Lally.

"It's a highly engaging medium that could have a major impact in extending education and training beyond geographical locations."

"3D worlds seem to do this in a much more powerful way than many other social tools currently available on the internet. When appropriately configured, this virtual environment can offer safe spaces to experience new learning opportunities that seemed unfeasible only 15 years ago."

The findings represent an early opportunity to assess the social and emotional impact of 3D virtual worlds. So far, there has been little in-depth research into how emotions, social activities and thinking processes in this area can work together to help young people learn.

"This kind of 3D technology has many potential applications wherever young people and adults wish to work together on intensive tasks."

"It could be used to simulate training environments, retail contexts and interview situations - among many other possibilities. These virtual worlds have potential uses in education, and also a wide range of other social and academic applications," he added.

Labels: 3D, ally, avatars, islands, virtual-worlds

12.07.2011

Reversing Brain-Aging and New Research

New research suggests that a treatment to reverse cellular aging in the brain may be around the corner. For more than a decade, scientists have tried to define conditions such as mild cognitive impairment (MCI) while searching for a way to reverse the age-related changes that affect individuals. With lifespans lengthening worldwide, such a breakthrough would be especially important.

The research suggests the following:

Drugs that affect the levels of an important brain protein involved in learning and memory reverse cellular changes in the brain seen during aging, according to an animal study in the December 7 issue of The Journal of Neuroscience. The findings could one day aid in the development of new drugs that enhance cognitive function in older adults.

Aging-related memory loss is associated with the gradual deterioration of the structure and function of synapses (the connections between brain cells) in brain regions critical to learning and memory, such as the hippocampus. Recent studies suggested that histone acetylation, a chemical process that controls whether genes are turned on, affects this process. Specifically, it affects brain cells' ability to alter the strength and structure of their connections for information storage, a process known as synaptic plasticity, which is a cellular signature of memory.

In the current study, Cui-Wei Xie, PhD, of the University of California, Los Angeles, and colleagues found that compared with younger rats, hippocampi from older rats have less brain-derived neurotrophic factor (BDNF) — a protein that promotes synaptic plasticity — and less histone acetylation of the Bdnf gene. By treating the hippocampal tissue from older animals with a drug that increased histone acetylation, they were able to restore BDNF production and synaptic plasticity to levels found in younger animals.

"These findings shed light on why synapses become less efficient and more vulnerable to impairment during aging," said Xie, who led the study. "Such knowledge could help develop new drugs for cognitive aging and aging-related neurodegenerative diseases, such as Alzheimer's disease," she added.

Labels: aging-brain, alzheimers, BDNF, Cui-Wei-Xie, journal-of-neuroscience

12.05.2011

Caffeine and Cognitive Performance Linked

This is because our body is subject to what we call the “circadian rhythm”, the ebb and flow of our “internal clock”. This internal clock determines when our nervous system is active and when our mind is alert. During those late nights, our body is primed to go to sleep.

It is not just our circadian rhythm that can affect our work performance. It is also common for many people to feel slow and lethargic during certain times of the day, especially after a heavy lunch.

This can lead to embarrassing situations, such as accidentally nodding off during an important meeting. Lethargy can sometimes even be life-threatening, for example when we have to drive in that condition.

Stay alert at work!

Fortunately, there is coffee. Caffeine is said to help improve certain aspects of our cognitive performance. In 2008, the International Food Information Council Foundation reviewed some studies and concluded that they consistently demonstrate that caffeine can improve certain aspects of cognitive function, even among well-rested volunteers.

These effects are usually quite immediate, because caffeine helps our brain increase its ability to process new stimuli.

Along with its irresistible taste and aroma, coffee is indeed well-suited to improve our mood. Better mood means more enjoyment of our work!

At the office -

Whenever we feel sluggish at work and our concentration begins to stray, it is good to take a short break and let coffee revive us.

Dealing with repetitive tasks -

It is common to find ourselves performing repetitive tasks at work. It is easy to lose concentration during these moments, and such carelessness can give rise to poor quality work and even workplace accidents.

Breaking the monotony with a cup of coffee will help us remain alert, and hence, productive under such circumstances.

Labels: benefits-of-caffeine, caffeine-references

12.02.2011

Multi-Tasking: Can We Really Multi-Task?

New research from the Tinbergen Institute has asked this hypothetical question and arrived at the following initial conclusions:

We find that multitasking significantly lowers performance in cognitive tasks compared to a sequential execution. This suggests that the costs of switching, which include recalling the rules, details and steps executed thus far, outweigh the benefit of a ‘fresh eye’. Note that this effect differs from the one found by Coviello et al. (2010). In their model, every new task takes resources away from the other active tasks which are closer to being completed, and juggling more tasks consequently increases the average duration of task-completion. Our results show that multitasking is bad for productivity even if one is not concerned with average duration.

And:

We do not find any evidence for gender differences in the ability to multitask. Besides, the share of switchers is exactly the same for men and women and the average number of switches is higher for men. Thus, the results contradict the claims of Fisher (1999): if men think so much more linearly than women, why don’t they insist more on a sequential schedule? Moreover, why is it that women do not adapt better to multitasking than men when forced to alternate? In sum, the view that women are better at multitasking is not supported by our findings.
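The point about average duration in the first excerpt can be made concrete with a little arithmetic. The short Python sketch below is only a toy illustration, not the model of Coviello et al. or the Tinbergen experiment: it assumes three hypothetical tasks of four work units each, a worker who completes one unit per time step, and no switching cost at all. The function names are invented for this example.

```python
def sequential_completion_times(work):
    """Finish each task completely before starting the next one."""
    times, clock = [], 0
    for units in work:
        clock += units
        times.append(clock)
    return times


def juggling_completion_times(work):
    """Do one unit of each unfinished task in turn (no switching cost)."""
    remaining = list(work)
    times = [0] * len(work)
    clock = 0
    while any(r > 0 for r in remaining):
        for i, r in enumerate(remaining):
            if r > 0:
                clock += 1
                remaining[i] -= 1
                if remaining[i] == 0:
                    times[i] = clock  # task i is done at this time step
    return times


def mean(values):
    return sum(values) / len(values)


tasks = [4, 4, 4]  # hypothetical workload: three tasks, four units each
seq = sequential_completion_times(tasks)
jug = juggling_completion_times(tasks)
print("sequential:", seq, "mean", mean(seq))  # [4, 8, 12], mean 8.0
print("juggling:  ", jug, "mean", mean(jug))  # [10, 11, 12], mean 11.0
```

Both schedules finish all the work at step 12, but under juggling every task finishes late, so the average completion time rises from 8 to 11 steps. The Tinbergen result goes further: once switching has a cost, performance itself drops, not just the average finishing time.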

Labels: multi-tasking, tasks-women-and-men, tinbergen, wired

12.01.2011

Benefits of Eating Fish Strengthened in New Study

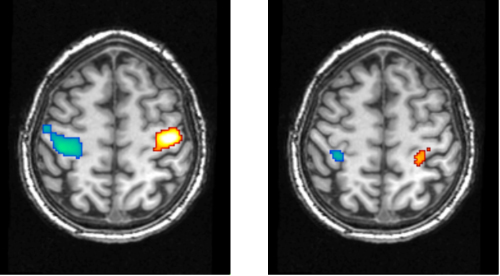

Eating fish once a week is good for brain health and lowers the risk of developing Alzheimer's disease and mild cognitive impairment (MCI), researchers from the University of Pittsburgh School of Medicine reported yesterday at the annual meeting of the Radiological Society of North America (RSNA) in Chicago.

Cyrus Raji, M.D., Ph.D., said:

"This is the first study to establish a direct relationship between fish consumption, brain structure and Alzheimer's risk. The results showed that people who consumed baked or broiled fish at least one time per week had better preservation of gray matter volume on MRI in brain areas at risk for Alzheimer's disease."

Labels: cyrus, raji, RSNA, salmon, salmon-fillets